Agentic AI

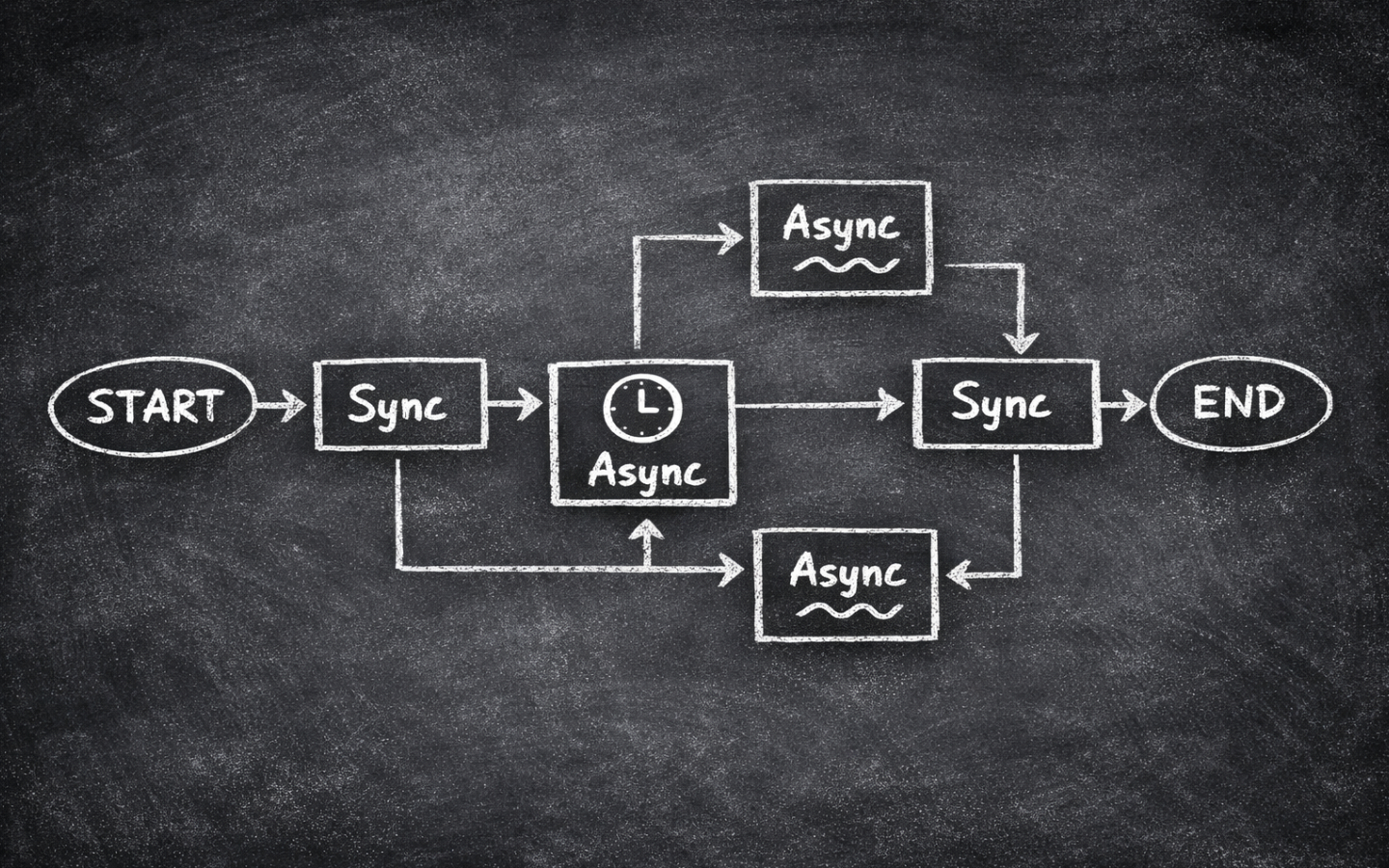

Using Async Effectively in LangGraph

In "Seven Tips for Performant Async Python," I focused on plain asyncio.

That’s the right place to start, because LangGraph doesn’t replace Python’s event loop or make blocking code magically concurrent.

If an async LangGraph node calls a blocking library, the graph still waits.

If …
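The blocking-call point above can be reproduced in plain asyncio, without LangGraph. In this minimal sketch, `blocking_lookup`, `naive_node`, and `offloaded_node` are hypothetical stand-ins for node bodies, not functions from LangGraph or from the post. Three "nodes" are run concurrently with `asyncio.gather`. When the blocking call is made directly inside `async def`, they run one after another. Offloading it with `asyncio.to_thread` (one common fix, not necessarily the one the full post recommends) lets them overlap.

```python
import asyncio
import time


def blocking_lookup(delay: float) -> str:
    # Hypothetical stand-in for a synchronous library call (e.g. a non-async HTTP client).
    time.sleep(delay)
    return "done"


async def naive_node(delay: float) -> str:
    # Calls the blocking function directly: the event loop cannot run
    # anything else until time.sleep returns.
    return blocking_lookup(delay)


async def offloaded_node(delay: float) -> str:
    # Runs the blocking function in a worker thread, so the event loop
    # stays free to start the other coroutines.
    return await asyncio.to_thread(blocking_lookup, delay)


async def run_three(node) -> float:
    # Run three copies of a node concurrently and return the wall-clock time.
    start = time.perf_counter()
    await asyncio.gather(*(node(0.2) for _ in range(3)))
    return time.perf_counter() - start


async def main() -> tuple[float, float]:
    naive = await run_three(naive_node)
    offloaded = await run_three(offloaded_node)
    return naive, offloaded


if __name__ == "__main__":
    naive, offloaded = asyncio.run(main())
    print(f"blocking call inside async def: {naive:.2f}s")
    print(f"offloaded with asyncio.to_thread: {offloaded:.2f}s")
```

The first timing is roughly the sum of the three delays (about 0.6 s) and the second roughly a single delay (about 0.2 s). Marking a function `async` does not by itself make the work inside it concurrent.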

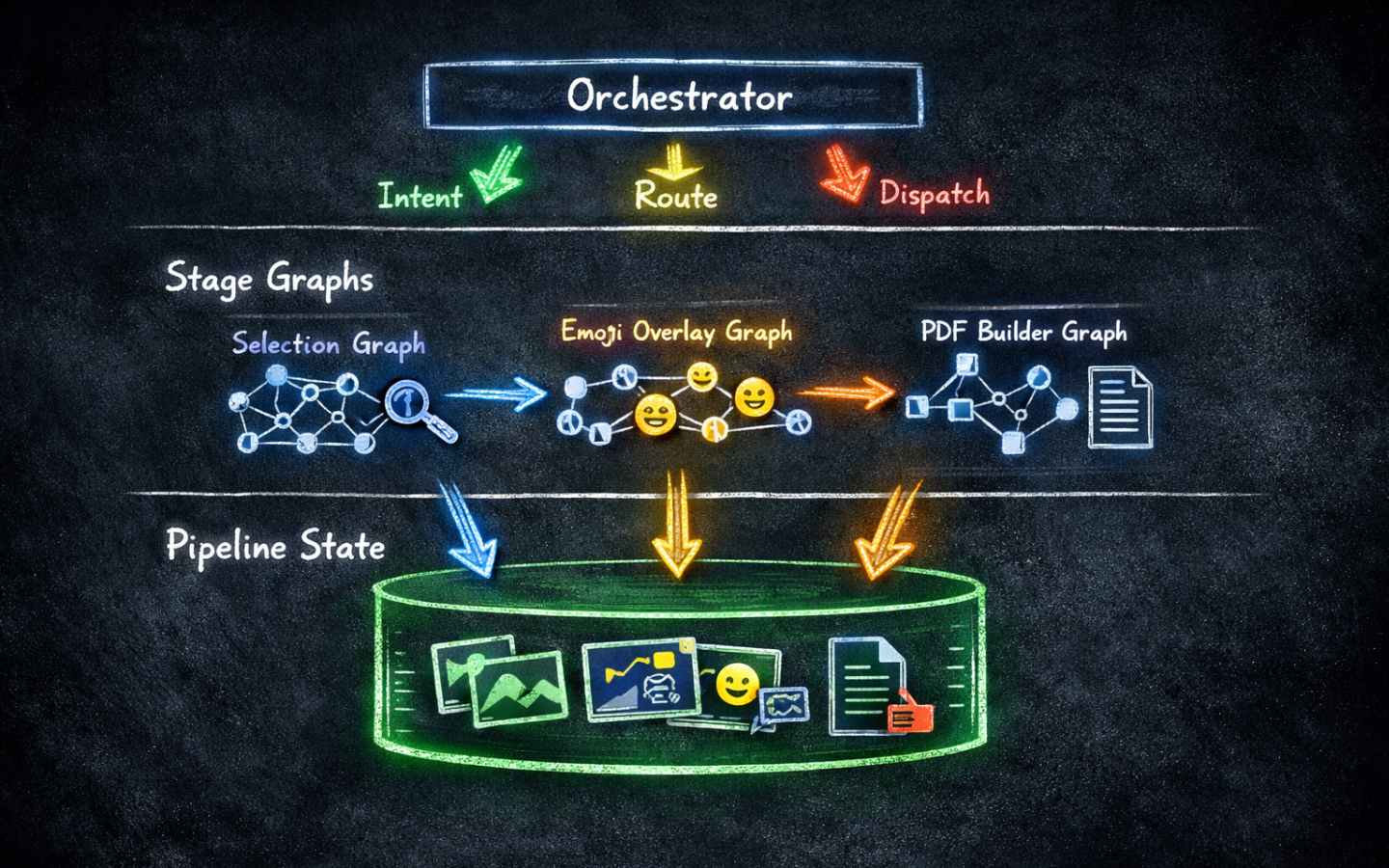

Building a Pipeline in LangGraph

The earlier posts in this series built self-contained graphs: one graph, one task, one run. But real workflows often span multiple stages, where each stage produces output that the next stage needs. The pipeline pattern I describe here isn’t an official LangGraph pattern — it’s an …

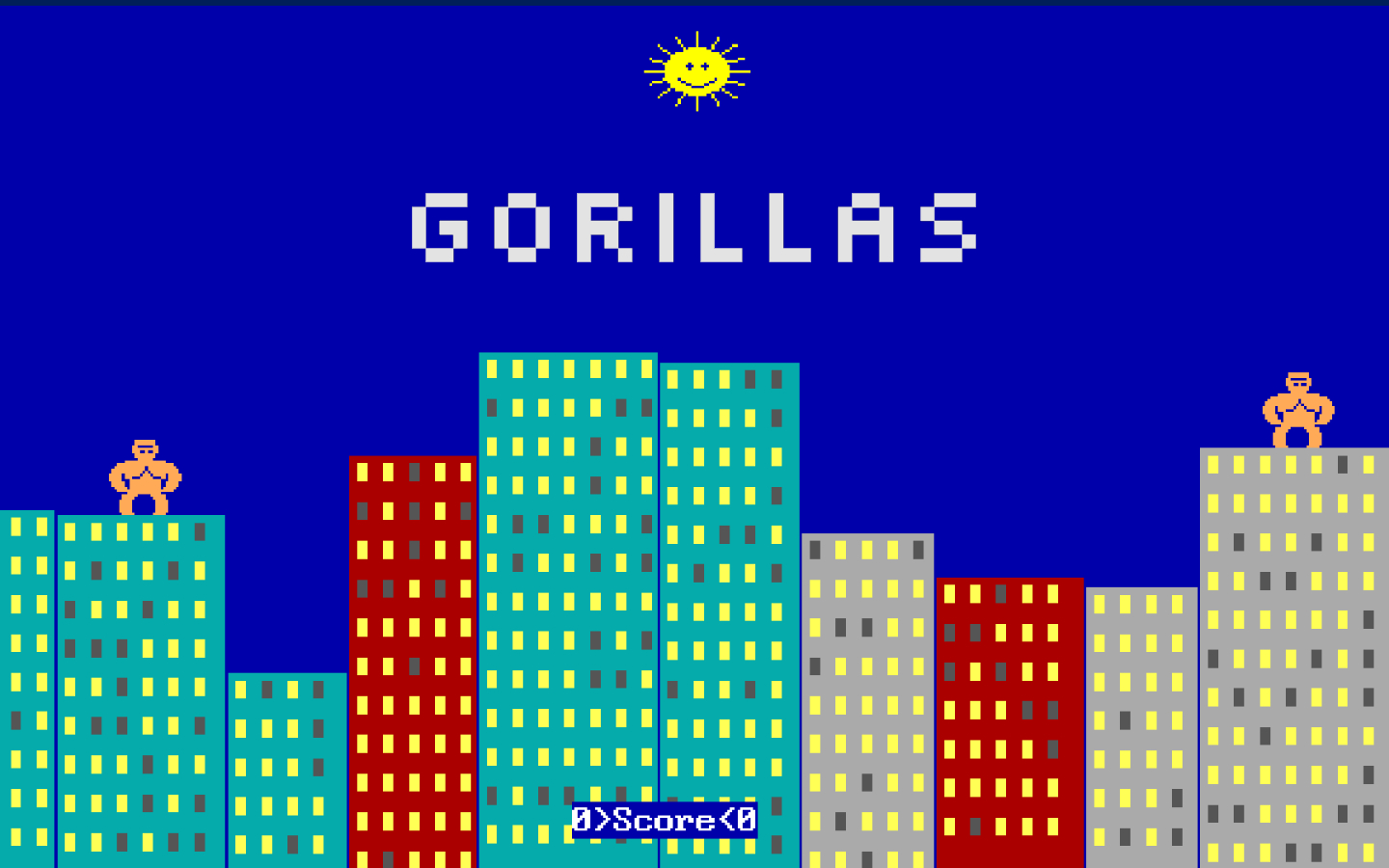

Claude Code vs Codex: Recreating an Iconic Game

If you grew up with a DOS machine in the early 1990s, there is a good chance you remember Gorillas. Two apes perched on rooftops, hurling explosive bananas across a city skyline — it shipped free with QBasic and it was many people’s first taste of programming a game, or at least playing one …

Concurrent Nodes in LangGraph

Real-world agents rarely do one thing at a time. They fetch data from multiple sources, run independent checks in parallel, and combine the results before moving on. LangGraph supports this natively with concurrent nodes, but there is a subtle catch when those nodes all write to the same piece of …

Using Tools in LangGraph

LLMs are impressive, but they are limited to the knowledge baked in at training time and can’t take actions in the world on their own. Tools are what change that. By giving an LLM access to tools, you turn it from a system that is frozen in time into an agent that can look up live data, run …

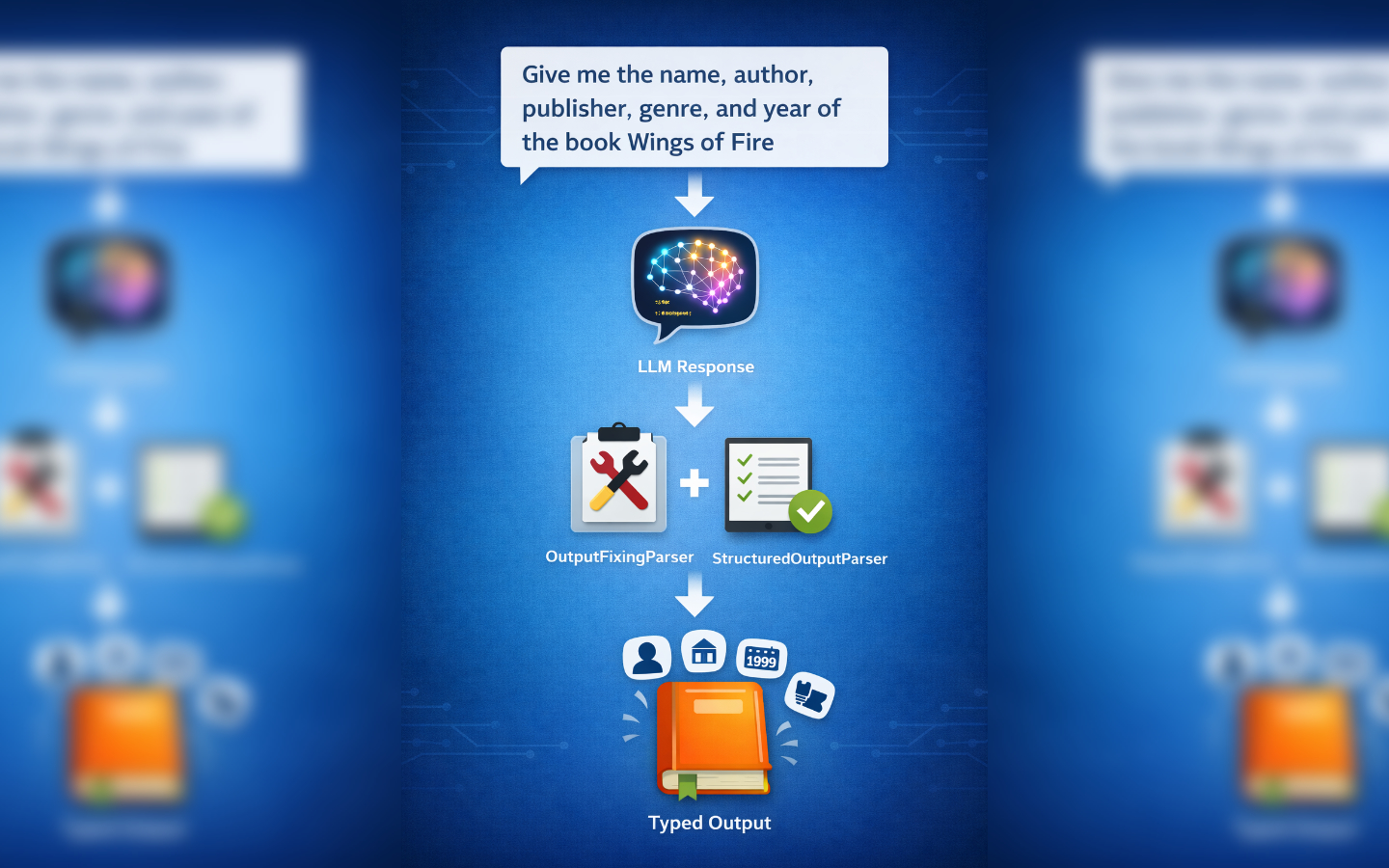

Structured Output in LangGraph

Large language models are incredibly versatile, but when your code depends on predictable data structures, free‑form text can be a headache. The same information can be expressed in countless ways, making downstream processing error-prone. Structured output bridges this gap: by defining a schema, …

Pausing for Human Feedback in LangGraph

Adding a human-in-the-loop step to a LangGraph flow is an easy way to improve quality and control without adding branching or complexity. In this post we will build a tiny three-node graph that drafts copy with an LLM, pauses for human feedback, and then revises the draft, using LangGraph’s …

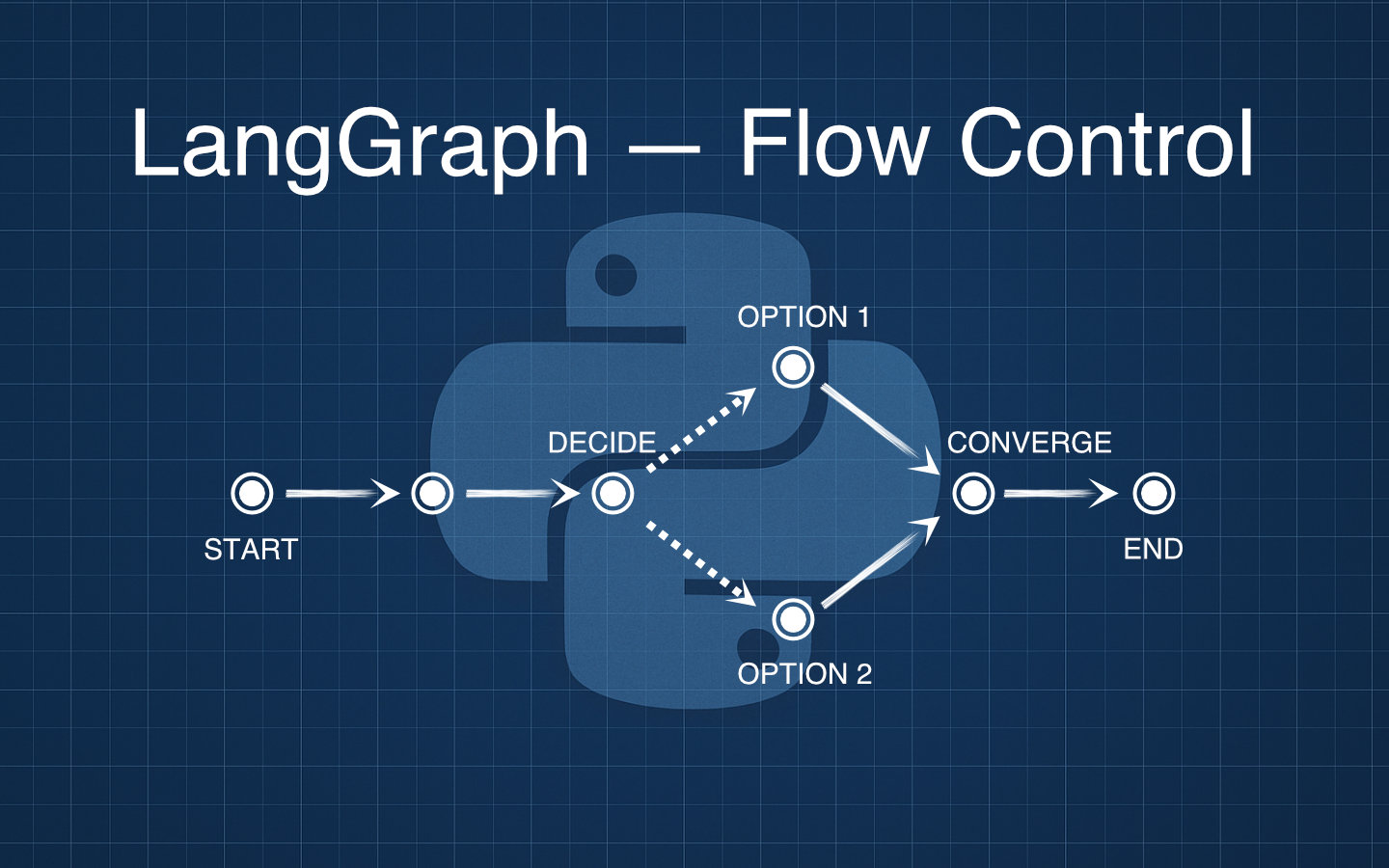

Controlling Flow with Conditional Edges in LangGraph

Conditional edges let your LangGraph apps make decisions mid-flow, so today we will branch our simple joke generator to pick a pun or a one-liner while keeping wrap_presentation exactly as it was.

In the previous post we built a two-node graph with joke_writer and wrap_presentation, and now we will …

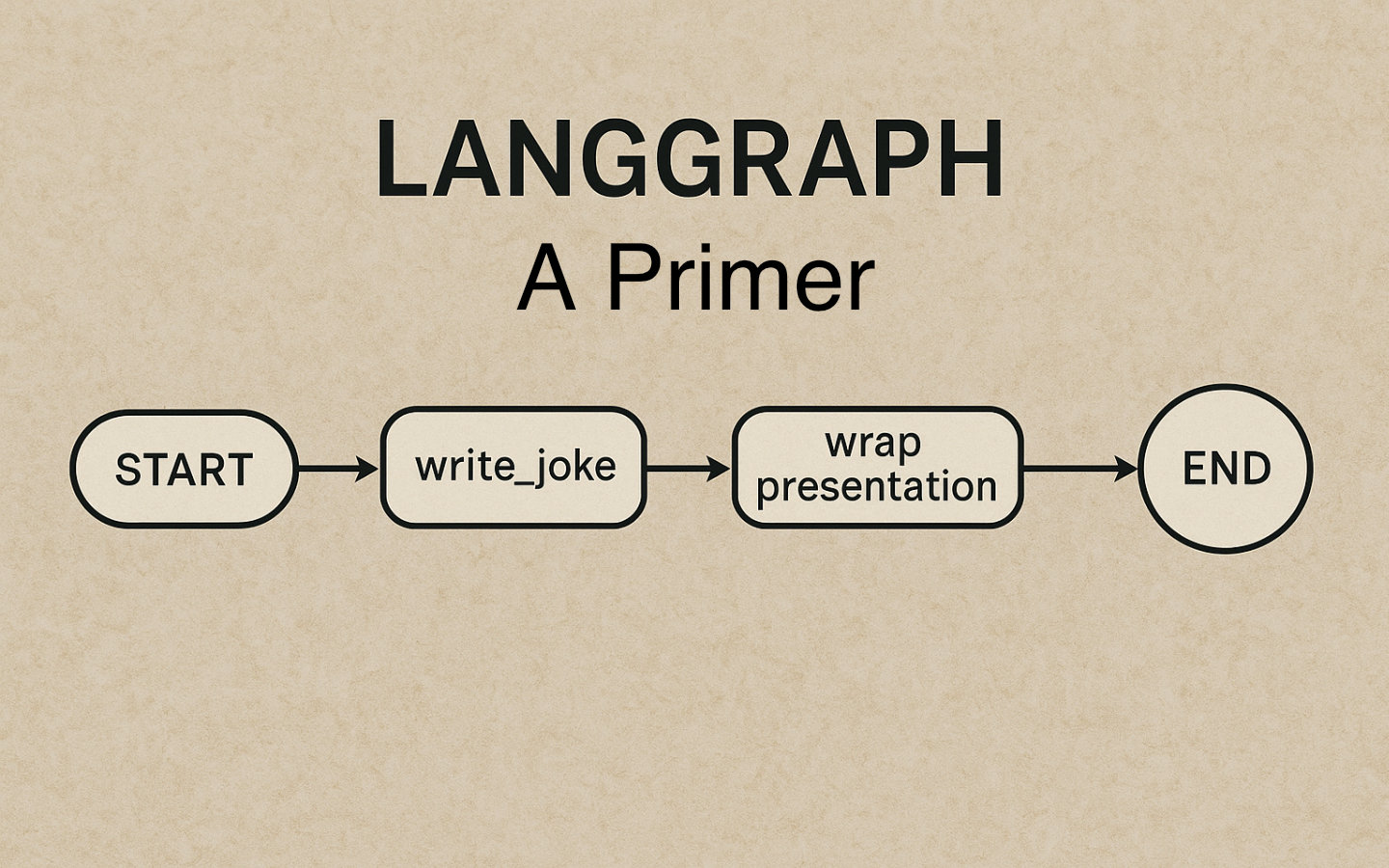

A Primer in LangGraph

LangGraph makes it easy to wire simple, reliable LLM workflows as graphs, and in this post we will build a tiny two‑node graph that turns a topic into a joke and then formats it as a mini conversation ready to display or send.

By the end, you will have a minimal Python project with a typed JokeState …

Using Agentic AI to Get the Most from LLMs

Agentic AI represents a generational leap forward in how artificial intelligence systems operate, moving beyond single, monolithic models to autonomous, goal-oriented agents.

If you missed it, my previous article on how LLMs work under the hood lays the foundation for how large language models …